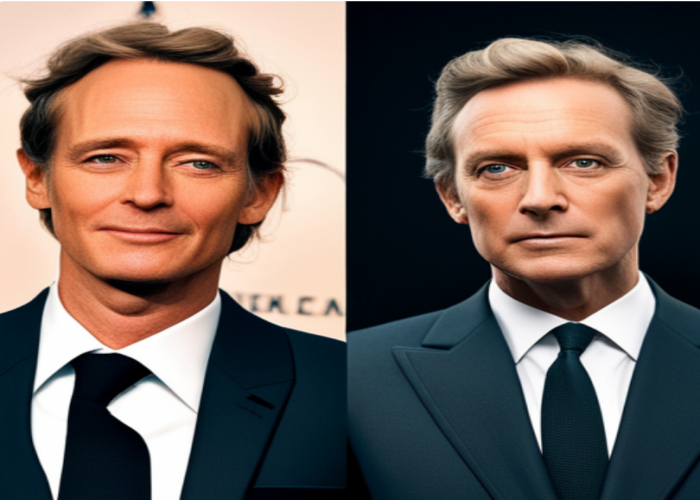

In recent times, we have witnessed rapid progress in Artificial Intelligence (AI) technology, enabling us to produce digital content that appears remarkably authentic. One such advancement is Deepfake, an AI technique for generating audio, videos, or images that look and sound genuine. As AI systems become increasingly sophisticated, the rapid development of Deepfake technology has given rise to a set of concerns and risks.

It is crucial for everyone involved in the field of AI, including technology professionals, data scientists, researchers, and students, to understand the factors behind the emergence of Deepfake technology. Understanding its implications and associated risks is equally important. This blog post aims to provide an accessible overview of the subject.

Deepfake technology is built upon two AI techniques: Generative Adversarial Networks (GANs) and Autoencoders. Here’s a brief overview:

Generative Adversarial Networks (GANs): This approach employs two AI models. One, the generator, produces fabricated data; the other, the discriminator, attempts to distinguish real data from fake data. Over time, the generator becomes adept at creating fakes that can deceive even the discriminator.

Autoencoders: These are neural networks trained to reconstruct their input data. In the case of deepfakes, they are employed to compress images or videos into a compact form that still retains the essential features of the original content. This condensed data is then reconstructed to create an image or video that essentially mimics the original.
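The compress-then-reconstruct idea behind autoencoders can be illustrated with a deliberately tiny sketch. It is not how deepfake models are built: it assumes 2-D points instead of images, a single-number code, and purely linear layers trained with plain gradient descent. The function name `train_linear_autoencoder`, the starting weights, and the learning rate are all hypothetical choices made for this example.

```python
def train_linear_autoencoder(points, epochs=1000, lr=0.05):
    """Fit a toy 2 -> 1 -> 2 linear autoencoder with full-batch gradient descent."""
    w1, w2 = 0.5, 0.5  # encoder: code = w1 * x1 + w2 * x2
    v1, v2 = 0.5, 0.5  # decoder: (x1_hat, x2_hat) = (v1 * code, v2 * code)
    n = len(points)
    history = []       # mean reconstruction error per epoch

    for _ in range(epochs):
        g_w1 = g_w2 = g_v1 = g_v2 = 0.0
        loss = 0.0
        for x1, x2 in points:
            code = w1 * x1 + w2 * x2   # compress two numbers into one
            e1 = v1 * code - x1        # reconstruction errors
            e2 = v2 * code - x2
            loss += e1 * e1 + e2 * e2
            # Gradients of the squared error with respect to each weight.
            g_code = 2 * (e1 * v1 + e2 * v2)
            g_v1 += 2 * e1 * code
            g_v2 += 2 * e2 * code
            g_w1 += g_code * x1
            g_w2 += g_code * x2
        history.append(loss / n)
        w1 -= lr * g_w1 / n
        w2 -= lr * g_w2 / n
        v1 -= lr * g_v1 / n
        v2 -= lr * g_v2 / n

    return (w1, w2), (v1, v2), history


# Assumed toy data: points on the line x2 = 2 * x1, so one number can describe each point.
points = [(t / 10, 2 * t / 10) for t in range(-10, 11)]
encoder, decoder, history = train_linear_autoencoder(points)
code = encoder[0] * 0.5 + encoder[1] * 1.0               # compressed form of (0.5, 1.0)
reconstruction = (decoder[0] * code, decoder[1] * code)  # approximate copy of the input
```

The reconstruction error in `history` shrinks as training proceeds, which is the same principle the paragraph above describes: keep only the essential features in the compressed form, then rebuild the content from them.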

Understanding these techniques gives us insight into the underlying principles of Deepfake technology. However, it’s important to note that as AI advances, the methods used for creating fakes could produce deepfakes that are more convincing and harder to detect.
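To make the generator and discriminator interplay described above more concrete, here is a minimal toy sketch of the alternating training loop. It rests on several assumptions that are not from this post: the "real data" are single numbers drawn from a Gaussian with mean 4, the generator is a linear function of random noise, the discriminator is a logistic classifier, and the generator uses the common non-saturating loss. All names and hyperparameters are hypothetical.

```python
import math
import random


def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def train_toy_gan(steps=2000, batch=32, lr=0.05, seed=0):
    """Alternate discriminator and generator updates on 1-D toy data."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator: fake = a * z + b
    w, c = 0.0, 0.0  # discriminator: D(x) = sigmoid(w * x + c)

    for _ in range(steps):
        real = [rng.gauss(4.0, 0.5) for _ in range(batch)]  # assumed "real" data
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        fake = [a * z + b for z in noise]

        # Discriminator step: minimise -log D(real) - log(1 - D(fake)).
        g_w = g_c = 0.0
        for x in real:
            d = sigmoid(w * x + c)
            g_w += -(1.0 - d) * x
            g_c += -(1.0 - d)
        for x in fake:
            d = sigmoid(w * x + c)
            g_w += d * x
            g_c += d
        w -= lr * g_w / batch
        c -= lr * g_c / batch

        # Generator step: minimise -log D(fake), i.e. try to fool the discriminator.
        g_a = g_b = 0.0
        for z in noise:
            d = sigmoid(w * (a * z + b) + c)
            dx = -(1.0 - d) * w  # gradient of the loss w.r.t. the fake sample
            g_a += dx * z
            g_b += dx
        a -= lr * g_a / batch
        b -= lr * g_b / batch

    return (a, b), (w, c)


generator, discriminator = train_toy_gan()
```

A toy setup like this may oscillate rather than settle, so it is best read as an illustration of the two competing updates and not as a stable training recipe.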

Deepfake technology, while remarkable from a technical standpoint, raises several ethical concerns:

Misrepresentation & Deception: The primary concern with deepfakes lies in their ability to convincingly portray individuals saying or doing things they never actually did. This can greatly infringe on an individual’s right to their image and reputation.

Privacy Violation: The technology often uses images and videos without obtaining consent from the individuals involved. This unauthorized use of personal data raises privacy issues.

Spread of Misinformation: Deepfakes can be used to spread false information, generate fake news, and fuel disinformation campaigns. The potential consequences are especially concerning in the realm of politics, where deepfakes could sway public opinion and even influence election outcomes.

Cybersecurity Threats: Deepfakes also pose a cybersecurity threat, particularly in phishing scams. Such a scam could use a fabricated video of someone the victim trusts to deceive them into sharing sensitive information.

Legal Challenges: Another challenge lies in the legal domain. Current laws may not adequately address the issues brought about by deepfakes, leaving little accountability for those who misuse this technology.

To sum it up, while deepfake technology can have legitimate applications such as entertainment or historical reenactments, it is crucial to establish regulations and sophisticated detection methods. Failing to do so could have far-reaching ethical implications and cause real harm. It’s essential for lawmakers, tech companies, and society as a whole to fully grasp these implications and take measures to manage them appropriately.

The risks associated with deepfake technology are multi-faceted and have serious implications across various sectors. At the core, these dangers emerge from the realistic and convincing artificial videos or images that deepfakes generate, often misleading viewers into believing in the authenticity of such content.

Authenticity Breach: Because artificial intelligence is employed to create deepfakes, the technology challenges the traditional notion of authenticity. Seeing is no longer believing: deepfakes can convincingly depict individuals engaging in actions they never actually performed, which erodes trust in digital content.

Violations of Privacy: Deepfakes bring forth concerns regarding privacy. With only a handful of available images or videos, deepfake technology can recreate and manipulate someone’s appearance or voice, opening the door to misuse and intrusion into their private life.

Political Disruption: The political landscape is particularly susceptible to the impact of deepfakes. Fabricated speeches or actions have the potential to sway public opinion, create unrest, and even influence election results.

Legal Challenges: One of the main obstacles in combating deepfakes is the absence of legislation specifically addressing this technology. The lack of a legal framework means that individuals who maliciously create or use deepfakes often face few legal consequences.

Given these risks, it becomes crucial to develop defense mechanisms against deepfakes. These could take the form of detection algorithms, strict regulations, or public awareness campaigns highlighting the dangers associated with deepfake technology. Without such measures in place, the widespread availability and increasing sophistication of this technology could threaten individual security as well as society as a whole.

To combat the risks and potential misuse of deepfake technology, several strategies can be implemented:

1. Raising Awareness: It is crucial to educate the public about the existence and potential dangers of deepfakes. By giving people knowledge about deepfakes, we can cultivate a discerning audience that is less likely to fall victim to deceptive content.

2. Detection Technology: Investing in detection algorithms is a key countermeasure. By using machine learning and AI, we can develop technologies that identify deepfakes by analyzing subtle cues, such as lighting, shadows, or inconsistencies in facial movements, that often go unnoticed by humans.

3. Regulation: Implementing legal frameworks can help prevent the misuse of deepfake technology. This could involve enacting laws that specifically criminalize malicious use of deepfakes or that mandate the disclosure of manipulated content created with deepfake technology.

4. Authentication: Another promising solution involves media authentication techniques. A system that verifies and certifies the authenticity of content at its creation establishes a chain of custody, making it more difficult for deepfakes to go undetected.

5. Collaboration: Fostering collaboration plays a key role in combating deepfakes. By working across countries and industries, we can combine resources, exchange insights, and coordinate responses to address this challenge.
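As a rough illustration of the detection idea in item 2, the sketch below scores how erratically a single facial landmark moves between video frames. Everything in it is an assumption made for illustration: the `track` input format, the `jitter_score` and `flag_suspicious` names, and the threshold value are hypothetical, and practical detectors are typically trained machine learning models rather than a hand-written rule like this.

```python
import math


def jitter_score(track):
    """Mean frame-to-frame acceleration magnitude of one landmark track.

    track: list of (x, y) positions, one per video frame (hypothetical format).
    Smooth motion gives a low score; erratic motion gives a higher one.
    """
    if len(track) < 3:
        raise ValueError("need at least three frames")
    total = 0.0
    for (x0, y0), (x1, y1), (x2, y2) in zip(track, track[1:], track[2:]):
        ax = x2 - 2 * x1 + x0  # second difference approximates acceleration
        ay = y2 - 2 * y1 + y0
        total += math.hypot(ax, ay)
    return total / (len(track) - 2)


def flag_suspicious(track, threshold=1.0):
    """Flag a track whose motion is more erratic than an assumed threshold."""
    return jitter_score(track) > threshold


# A landmark moving steadily versus one that jumps up and down every frame.
smooth = [(i, 2 * i) for i in range(10)]
jittery = [(i, 2 * i + (1.5 if i % 2 else -1.5)) for i in range(10)]
```

Here `flag_suspicious(smooth)` is false and `flag_suspicious(jittery)` is true. A single hand-tuned cue like this would be easy to evade, which is one reason to combine many cues and learn them from data.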

In conclusion, it is important to acknowledge the risks associated with the misuse of deepfake technology. However, by taking a combined approach that includes raising awareness, implementing detection technology, enacting regulations, establishing authentication measures, and fostering collaboration, we can combat these threats and create a safer digital environment.

In closing, the emergence of deepfake technology brings both possibilities and concerns. On one hand, it has the potential to revolutionize fields like entertainment and personalized advertising. On the other hand, it poses risks to personal privacy, security, and democratic processes.

Awareness: Increasing awareness of deepfakes within society is crucial to protecting ourselves from these risks. By improving people’s understanding of deepfakes’ existence and implications, we empower individuals to question and critically evaluate content.

Technology: Advances in machine learning and artificial intelligence also play a key role in the fight against deepfakes. Developing technologies that detect cues often overlooked by humans will help us differentiate between deepfakes and authentic content.

Regulations: Implementing regulations that govern the use of deepfake technology is another important part of addressing this issue. These regulations should cover creation guidelines, distribution restrictions, and penalties for misuse. We should strongly advocate for such regulations: laws that criminalize the malicious use of deepfakes and mandate the disclosure of manipulated content can offer legal remedies.

Proof of Authenticity: Implementing media authentication techniques can help verify and certify the genuineness of content from its point of creation, establishing a traceable digital chain of custody that makes it more difficult for deepfakes to go undetected.

Collaborative Efforts: International cooperation plays a key role in combating the threat posed by deepfakes. By pooling resources, sharing insights, and coordinating responses, we can tackle this challenge more effectively.
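The authentication idea mentioned above (certify content when it is created, then keep a traceable chain of custody) can be sketched with Python's standard `hashlib` and `hmac` modules. This is a minimal sketch under stated assumptions: the function names and record layout are hypothetical, and a shared secret key is used only to keep the example short, whereas a production system would more likely rely on public-key signatures.

```python
import hashlib
import hmac


def sign_media(content: bytes, key: bytes) -> str:
    """Create an authenticity tag for media bytes at creation time."""
    digest = hashlib.sha256(content).digest()
    return hmac.new(key, digest, hashlib.sha256).hexdigest()


def verify_media(content: bytes, key: bytes, tag: str) -> bool:
    """Check that the content still matches the tag issued at creation."""
    return hmac.compare_digest(sign_media(content, key), tag)


def append_custody_record(chain, event: str):
    """Return a new chain with an event linked to the previous record's hash."""
    prev_hash = chain[-1]["hash"] if chain else "0" * 64
    record_hash = hashlib.sha256((prev_hash + event).encode("utf-8")).hexdigest()
    return chain + [{"event": event, "prev": prev_hash, "hash": record_hash}]


def custody_chain_is_intact(chain) -> bool:
    """Recompute every link; any edited or reordered record breaks the chain."""
    prev_hash = "0" * 64
    for record in chain:
        expected = hashlib.sha256((prev_hash + record["event"]).encode("utf-8")).hexdigest()
        if record["prev"] != prev_hash or record["hash"] != expected:
            return False
        prev_hash = record["hash"]
    return True


key = b"demo-secret-key"           # placeholder key for illustration only
video = b"original video bytes"    # stands in for real media content
tag = sign_media(video, key)

chain = append_custody_record([], "captured on device")
chain = append_custody_record(chain, "uploaded to newsroom")
```

With these pieces, `verify_media(video, key, tag)` succeeds for the untouched bytes and fails once they are altered, and `custody_chain_is_intact(chain)` fails if any recorded event is changed after the fact.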

In summary, countering the risks associated with deepfake technology requires a multifaceted approach. It is through raising awareness, technological advancements, regulatory frameworks, authentication techniques, and international collaboration that we can hope to mitigate the dangers posed by deepfakes and promote a safer digital environment.
